The BBC recently ran a news item describing how an airliner got into difficulties because of a software flaw. On closer examination, it’s probably more precise to say that an airline pilot got into difficulties because some people interpret the meaning of the title “Miss” differently to others, but that’s not as snappy, and doesn’t follow the usual narrative of strangely placing human blame in the laps of machines.

The story described how a Tui jet took off with insufficient thrust because the calculations of total passenger weight were under in the order of 1.2 metric tonnes. The cause of this miscalculation was apparently because the software that calculated the total plane weight was written by programmers who lived in a country where it was assumed that “Miss” meant a female child and “Ms” meant an female adult. As a result a number of the female passengers we given an assumed average weight of about half of what their average weight probably was.

As I launch into an exercise in my organisation to improve the maturity of how we manage, process and analyse data, it’s a really straightforward example of what can happen if there isn’t agreement about what things mean. It’s also a tiny scratch on the surface of the ways in which tiny flaws in data can have huge and significant consequences as we increasingly rely on algorithms that will assume data is correct and of consistent meaning.

The homes in which we live are becoming increasingly connected. But even with little or no smart technology, small streams of data can be remarkably intrusive in the ways in which they can draw assumptions about what we do in our homes.

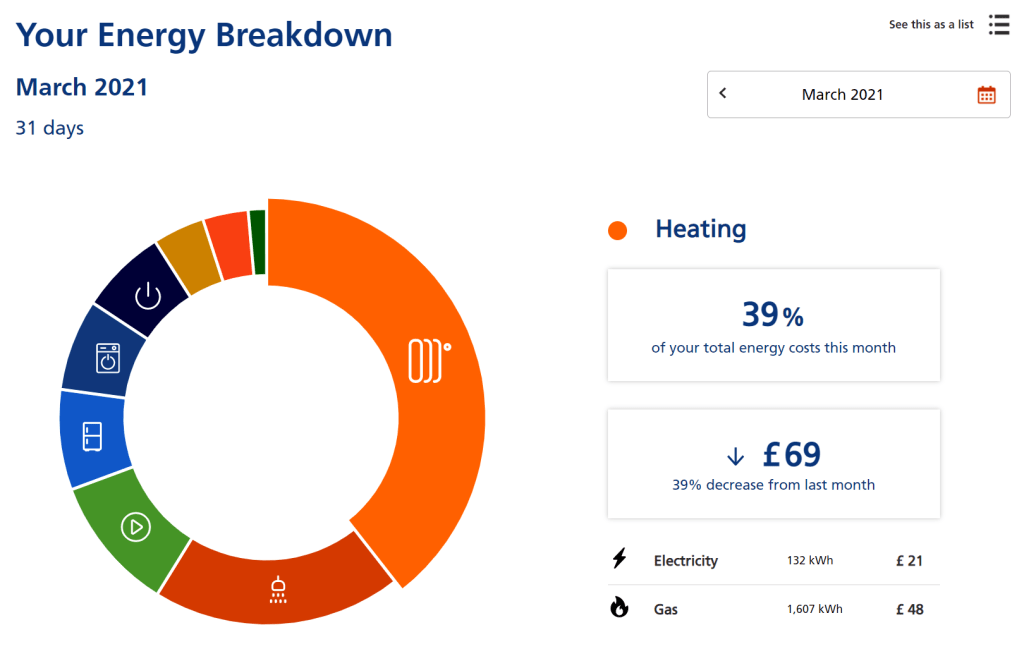

For example, my current energy company, from getting data from my smart meters every 30 minutes, can give this sort of breakdown of what we are doing in our home:

Let’s just think about that for a moment. From merely the data that they get on half-hourly intervals about the consumption rate of electricity and gas in my home, a bit of profiling about the size of my house and household, and weather data through data analysis they can estimate how much we are using on heating, hot water, home entertainment, fridges and freezers, laundry, lighting and cooking. As they put it:

That’s powerful stuff. I also have no idea if it is in any way right. And if my energy supply company decided that they would start to adjust tariffs to reflect different types of energy consumption, I’d be in no position to counter their algorithms if I believed that they were charging me incorrectly. I’m also not sure I want my energy supply company knowing how much time we spend watching TV…

The complete trust we are starting to place into algorithms, and increasingly ones that are learned by the machine rather than directly crafted by programmers, is actually a complete trust in the data on which these algorithms are trained and then operate.

And therein lies a huge risk for us all. Algorithms express what happen in the future based on the data that describes the past. If that data is based on bad assumptions, bad things can happen (as Tui Airlines almost found out). If that data is biased because it reflects a biased society which excludes certain types of people, those biased will be reinforced and baked into black boxes of code which often not even the programmers can adequately explain.

As we stand at the precipice of “smart” and “connected” being embedded by default into so many things that previously were not smart and disconnected, we need frameworks to help us make decisions about what we should and shouldn’t do. The Silicon Valley culture of not understanding that just because you can, you should is not something that is to be desired. We need robust ways to know when we shouldn’t, and then we should act in that way.

There are a few great references to start this work. Cathy O’Neil’s Weapons of Math Destruction is a great explainer of how algorithms can reinforce problems rather than solve them. Caroline Criado-Perez Invisible Women is encyclopaedic in its exploration of how much of the world has been built around the assumption of the user being male. The Netflix movie Coded Bias explores more, particularly looking at the ways in which facial recognition causes problems for many groups. Carl Bergstrom & Jevin West’s Calling Bullshit examines data, algorithms and statistics from a number of dimensions.

I’m hoping to collaborate with a few other organisations on this, and will keep a commentary of what we do as we go. Ultimately I’m expecting that we’ll end up with a simple framework that will allow our people to be able to make decisions when they are looking to create or collect new data, look to keep or destroy existing data, and also when they are looking to acquire new technologies, particularly those that explore the use of Artificial Intelligence, Machine Learning or predictive analytics.

One thought on “Data and ethics”