There are some amazing developments emerging into the mainstream as a result of technologies badged variously as Artificial Intelligence, Machine Learning, Robotics, Natural Language Processing and other whizzy-sounding titles. But alongside any new wave of technology comes a tsunami of marketing hype, overblown expectations, silver bullets and rainbow-pooping unicorns.

Non-technologists and technologists alike need to be able to sift the useful or potentially useful from the snake oil. Here are six questions that might help you on your way.

1 Why is this wave of automation any different to those that have come before?

It seems that we are currently surrounded by media coverage that tells us that these emerging technologies are about to take our jobs. Depending on your job, that might, over time, well be the case. But why is that any different, or indeed any more scary, than the repeated waves of automation that have occurred since the dawn of the industrial age in the 18th Century?

Manual labour was increasingly replaced by machines (much to the consternation of the Luddites and the Chartists). “But this wave is about knowledge work!” you may cry. But office activities have been just as subject to automation as those on the factory floor or in the field. Telephonists, typists and filing clerks have all fallen by the wayside with the rise of information technology, and yet we are all still finding plenty of work to do.

Automation creates new types of work. Rather than worrying about whether existing jobs will be sustained or not, we should instead focus on what the new jobs should be. Will they be centaur-like roles, augmented by the powerful new technologies at our disposal? Or will they be subservient roles, tending and feeding the machines and doing tasks that don’t play to our strengths but are simply too fiddly or expensive to automate?

2 Are you being suckered by anthropomorphism?

Most of the waves of automation that have come before have been particularly machine-like. They have looked like machines. They have sounded like machines. They have been given machine-like names.

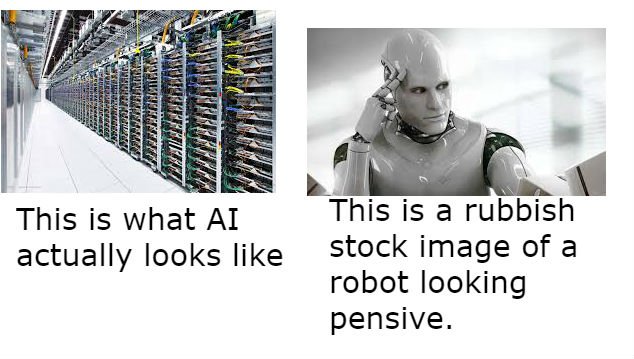

This time around we have things that are being made to look or sound like us. We give them names like “Ross” or “Watson”, “Siri” or “Alexa”. We “converse” with them. We represent them in the media with human-like pictures.

Anthropomorphising these technologies plays into a particularly vain human trait – we think things that look a bit like us are actually like us. Is the scariness of this wave of automation merely because marketing departments are playing on that vanity?

3 How big is your training data?

Almost all of these automation technologies are based on principles of the statistical analysis of enormous quantities of data. If someone is promising that you will gain intelligent insight from your own data, you need to ask questions about how much information is required to adequately train the machines.

By way of context, one of the most familiar examples of how modern techniques have enabled useful services is in the field of statistical language translation. Services like Google Translate have applied data analytics to “solve” a problem that linguists couldn’t. How much data? Well, to achieve reasonable translation between a pair of languages, something in the order of 2.5 billion words is required. How big is that? About 37,000 average-length novels. That’s a lot of words.

If you don’t have that kind of volume of data, how are the machines that are promising magic to be trained? If it’s with external data sets, will those have relevance to your own organisation?
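To put the 2.5 billion words figure in perspective, here is a quick back-of-the-envelope check of what "average-length novel" has to mean for the 37,000 comparison to hold. The two input numbers come from the text above; the variable names are just illustrative.

```python
# Figures quoted in the text above.
WORDS_NEEDED = 2_500_000_000   # words for one language pair
NOVELS = 37_000                # "average-length novels"

# Implied length of one "average" novel, in words.
words_per_novel = WORDS_NEEDED / NOVELS

print(f"Implied novel length: about {round(words_per_novel):,} words")
```

In other words, the comparison assumes a novel of somewhere under 70,000 words. If your organisation's documents add up to a few shelves' worth rather than a library's worth, that is the gap to ask vendors about.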

4 Is understanding causation more important than just identifying correlation?

Not only are these modern techniques based on statistical analysis, but they also rely heavily on finding statistical correlations without necessarily understanding issues of causality.

If you don’t understand why that might be important, then look at this chart (taken from the awesome site http://www.tylervigen.com/spurious-correlations)

I’m a sociologist. That chart shows undoubtedly that sociology is rocket science. Stick that in your pipe and smoke it, Maureen Lipman.

Now sometimes understanding causality isn’t important. But basing systems and processes on AI that only worries about correlation will be great until the correlation no longer holds true. Tim Harford wrote a great article explaining this a few years ago.
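A rough sketch of the point, using entirely invented numbers: two things that have nothing to do with each other can correlate strongly simply because both happen to be rising, and a system built on that pattern breaks the moment one of them changes direction. The series names and values below are hypothetical, and the Pearson calculation is written out by hand so nothing beyond the standard library is needed.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented data: two unrelated quantities that both happen to rise.
widgets_sold_early = [10, 12, 14, 16, 18, 20]
umbrellas_lost_early = [3, 4, 4, 5, 6, 7]

# Invented data: widgets keep rising, umbrella losses start to fall.
widgets_sold_later = [22, 24, 26, 28, 30, 32]
umbrellas_lost_later = [7, 6, 6, 5, 4, 3]

r_early = pearson(widgets_sold_early, umbrellas_lost_early)
r_later = pearson(widgets_sold_later, umbrellas_lost_later)

print(f"early period: r = {r_early:.2f}")  # strongly positive
print(f"later period: r = {r_later:.2f}")  # strongly negative
```

Anything "trained" on the early period would happily predict umbrella losses from widget sales, and would be confidently wrong in the later one. Nothing in the correlation itself tells you which situation you are in.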

5 How will you get the people to change their behaviours?

Technology doesn’t change the world. Humans do. How we act, and how we behave. New technologies won’t magically shift human behaviours.

In particular, many of the claims about AI technologies are based on the idea that they will provide insight that will lead to better human decision-making. But human decision-making isn’t something that is hard-wired to logic – we have a series of psychological biases that help us to make sense of the world around us. One in particular – confirmation bias – means that we seek out information to validate our gut feel. How will an AI Insight machine address such biases?

6 Is it a clock or is it a cloud?

I’m a big fan of metaphor, and one of my favourites is that of Karl Popper who described the world as being divided into things that are like clocks and things that are like clouds.

Clocks are possibly complicated, but they are knowable. They are bounded. They are repeatable. If one goes wrong then you can gather data until you eventually can diagnose the issue and fix or replace. Clocks are the world of “best practice”. Clock-like approaches are deployed when you are dealing with familiar problems.

However, much of the world is like clouds. Clouds are chaotic, complex. When you reach out into a cloud it changes its shape around you. Clouds are the unknown.

New emerging technologies are cloud-like (not, I hasten to add, to be confused with The Cloud, which is something completely different). Nobody knows with any certainty how a new approach with a new technology will impact an organisation. It might do what you thought. It might not. It might result in totally different things happening. Until you try, you won’t know.

Clouds are agile, clocks are waterfall. Clocks are hard business cases with knowable ROI. Clouds are much more speculative. Most of the emerging technologies we are seeing hyped today are cloud-like.