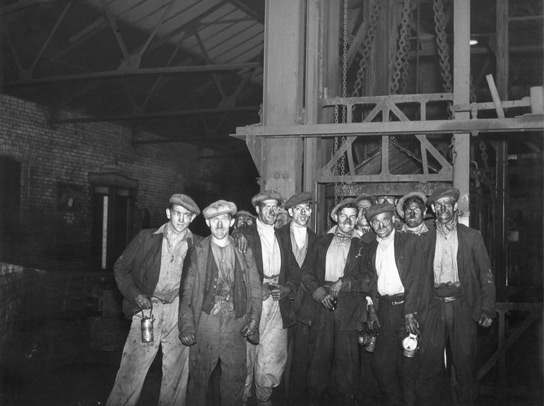

In post-war Britain, the scientists Eric Trist, Ken Bamforth and Fred Emery researched something strange that was going on in the coal mining industry at the time.

Having survived the ravages of the global conflict, the mining industry was investing heavily to bring itself into the modern age. New state-of-the-art machinery was deployed, but productivity declined. Pay and conditions were improved, yet absenteeism rose. Logical actions that were expected to produce logical outcomes were instead producing unpredictable results.

What Trist, Bamforth and Emery concluded was that whilst some logical actions led to logical outcomes, when both people and technology were involved it was rare to find everything occurring in a nice, neat cause-and-effect way. If you addressed the people factors (the “socio”) or the engineering (the “technical”) in isolation, you’d probably not get the results you were looking for.

I’m no great expert in the theory of socio-technical systems thinking, but it seems to me to be an eminently sensible approach. And one that, sixty years after it was first articulated, we still seem to ignore on a regular basis.

I was reminded of all of this when reading the most recent post from Hal Berenson, a blogger and former distinguished engineer at Microsoft. The piece gives an interesting take on some of the challenges that Microsoft face in light of Windows 8, but what I found particularly telling was Hal’s early challenge to the idea of Windows 8 being referred to as the “new Windows Vista”, namely:

“The problem I have with the Windows 8 as Windows Vista analogy are the quality problems that Vista had. It just didn’t work. That’s not a problem Windows 8 had, so I really don’t like the comparison. For me its a visceral thing.”

I completely understand where Berenson is coming from: Vista was a buggy product that didn’t work very well because the code wasn’t up to a basic level; the engineering quality of Windows 8, however, is very high. I also think his analysis probably highlights many of the problems that have led Windows 8 to not be the rip-roaring success that Redmond hoped it would be.

However, it strikes me as extremely worrying that a piece of technology can be described as “working” if it meets engineering standards of functionality, irrespective of the outcomes. This is the engineer’s fallacy: that the logical best solution is the best solution. It is the sort of thinking that led to (in a hackneyed old example) VHS triumphing over Betamax.

The quality of a product that is to be used by humans surely cannot be assessed on engineering grounds alone? That might sound more than a little pedantic, but my view is that teams that start from the position that engineering perfection is in some way separate from the overall user experience are setting themselves up to fail. And in fact, if a product is useful and usable, we’ll actually put up with quite a lot of engineering imperfection…
